Krawl

A modern, customizable web honeypot server designed to detect and track malicious activity from attackers and web crawlers through deceptive web pages, fake credentials, and canary tokens.


Demo

Tip: crawl the robots.txt paths for additional fun

Krawl URL: http://demo.krawlme.com

View the dashboard: http://demo.krawlme.com/das_dashboard

What is Krawl?

Krawl is a cloud‑native deception server designed to detect, delay, and analyze malicious attackers, web crawlers and automated scanners.

It creates realistic fake web applications filled with low‑hanging fruit such as admin panels, configuration files, and exposed fake credentials to attract and identify suspicious activity.

By wasting attacker resources, Krawl helps clearly distinguish malicious behavior from legitimate crawlers.

It features:

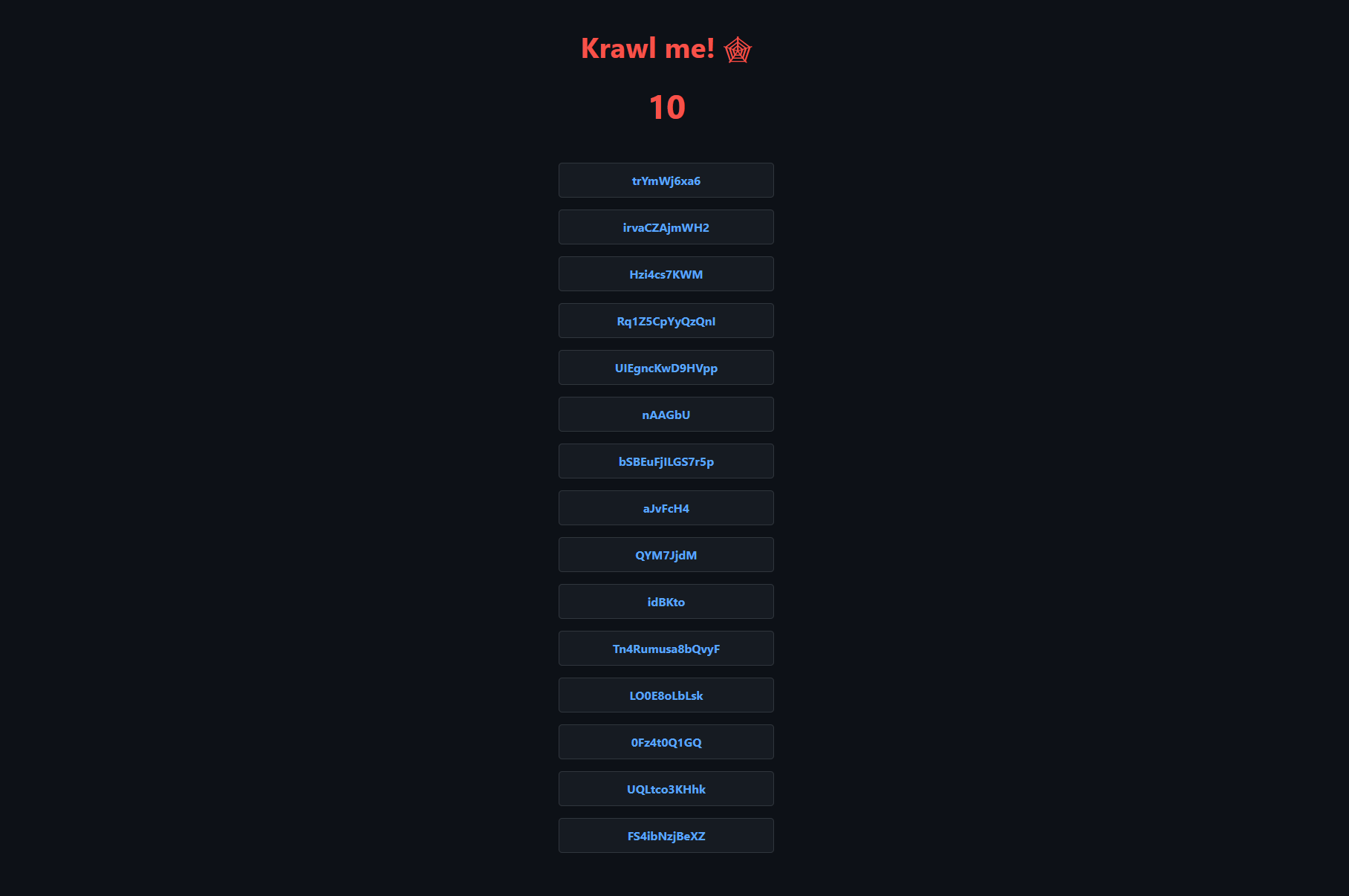

- Spider Trap Pages: Infinite random links to waste crawler resources based on the spidertrap project

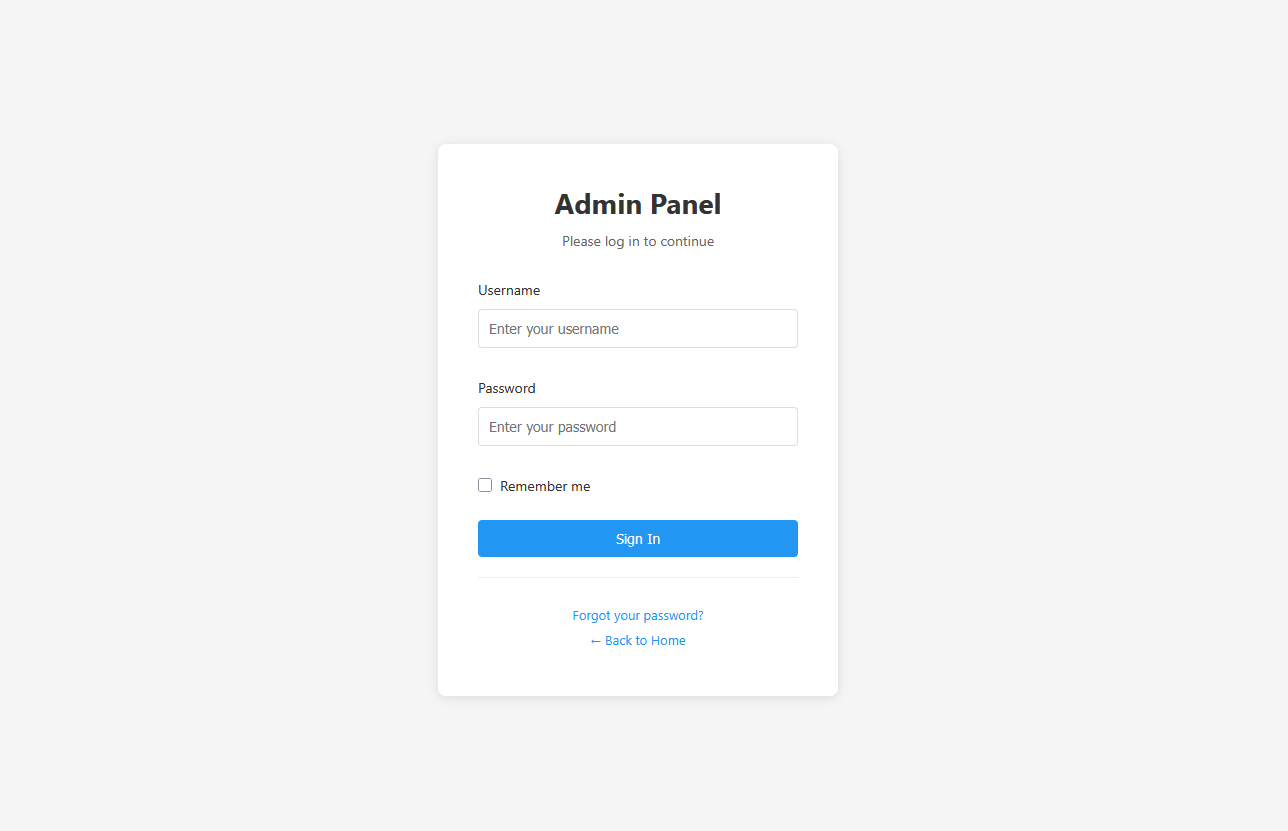

- Fake Login Pages: WordPress, phpMyAdmin, admin panels

- Honeypot Paths: Advertised in robots.txt to catch scanners

- Fake Credentials: Realistic-looking usernames, passwords, API keys

- Canary Token Integration: External alert triggering

- Random server headers: Confuse attacks based on server header and version

- Real-time Dashboard: Monitor suspicious activity

- Customizable Wordlists: Easy JSON-based configuration

- Random Error Injection: Mimic real server behavior
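As a rough illustration of the spider trap idea from the list above, the following minimal sketch generates a page of random links, each of which would lead to another generated page. It is not Krawl's actual implementation: the function names and the character set are assumptions, and the ranges simply mirror the documented defaults for KRAWL_LINKS_LENGTH_RANGE and KRAWL_LINKS_PER_PAGE_RANGE.

```python
import random
import string

# Hypothetical parameters mirroring the documented defaults
# (KRAWL_LINKS_LENGTH_RANGE=5,15 and KRAWL_LINKS_PER_PAGE_RANGE=10,15).
LINK_LENGTH_RANGE = (5, 15)
LINKS_PER_PAGE_RANGE = (10, 15)
CHAR_SPACE = string.ascii_lowercase + string.digits  # assumed character set


def random_link(rng: random.Random) -> str:
    """Return one random relative path made of characters from CHAR_SPACE."""
    length = rng.randint(*LINK_LENGTH_RANGE)
    return "/" + "".join(rng.choice(CHAR_SPACE) for _ in range(length))


def trap_page(rng: random.Random) -> str:
    """Build an HTML page whose links all point at further random paths."""
    count = rng.randint(*LINKS_PER_PAGE_RANGE)
    links = [random_link(rng) for _ in range(count)]
    items = "\n".join(f'<li><a href="{href}">{href}</a></li>' for href in links)
    return f"<html><body><ul>\n{items}\n</ul></body></html>"


if __name__ == "__main__":
    print(trap_page(random.Random()))
```

Because every link is freshly generated, a crawler that follows them never runs out of new URLs to visit.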

🚀 Installation

Docker Run

Run Krawl with the latest image:

    docker run -d \
      -p 5000:5000 \
      -e KRAWL_PORT=5000 \
      -e KRAWL_DELAY=100 \
      -e KRAWL_DASHBOARD_SECRET_PATH="/my-secret-dashboard" \
      -e KRAWL_DATABASE_RETENTION_DAYS=30 \
      --name krawl \
      ghcr.io/blessedrebus/krawl:latest

Access the server at http://localhost:5000

Docker Compose

Create a docker-compose.yaml file:

    services:
      krawl:
        image: ghcr.io/blessedrebus/krawl:latest
        container_name: krawl-server
        ports:
          - "5000:5000"
        environment:
          - CONFIG_LOCATION=config.yaml
          - TZ=Europe/Rome
        volumes:
          - ./config.yaml:/app/config.yaml:ro
          # bind mount for firewall exporters
          - ./exports:/app/exports
          - krawl-data:/app/data
        restart: unless-stopped

    volumes:
      krawl-data:

Run with:

docker-compose up -d

Stop with:

docker-compose down

Kubernetes

Krawl can also be deployed natively on Kubernetes, either with a manifest or with the Helm chart.

Use Krawl to Ban Malicious IPs

Krawl uses a reputation-based system to classify attacker IP addresses. Every five minutes, Krawl exports the identified malicious IPs to a malicious_ips.txt file.

This file can either be mounted from the Docker container into another system or downloaded directly via curl:

curl https://your-krawl-instance/<DASHBOARD-PATH>/api/download/malicious_ips.txt

This file enables automatic blocking of malicious traffic across various platforms. You can use it to update firewall rules on:

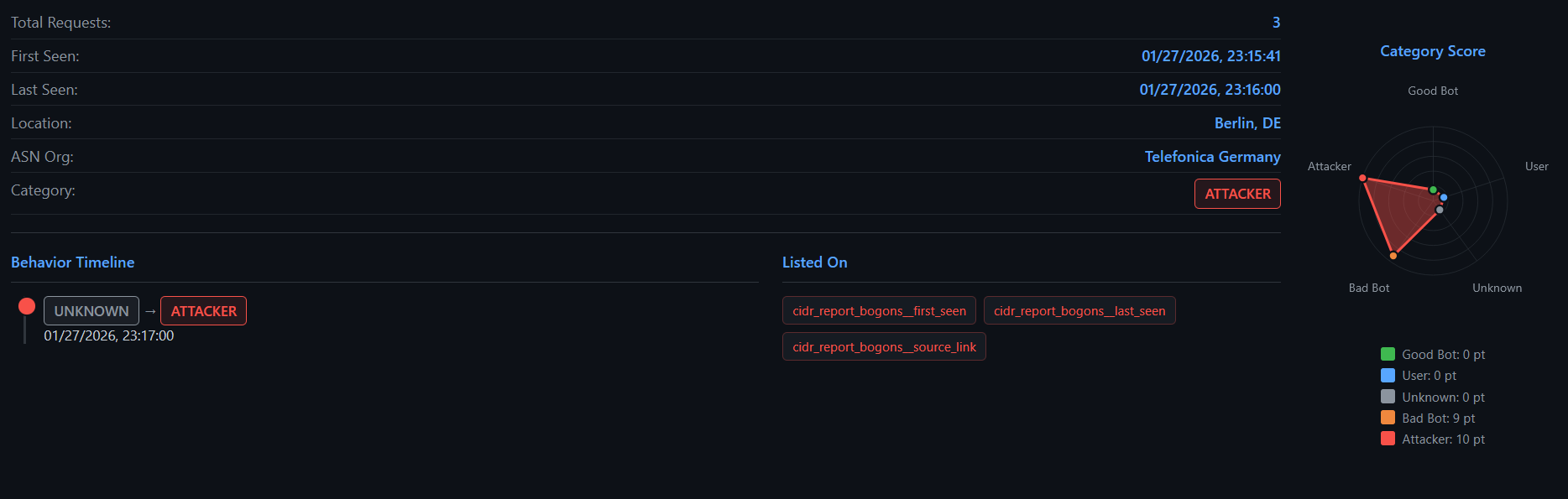
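As a hedged example of consuming the export, the sketch below parses the downloaded text and renders one firewall rule per address. It assumes malicious_ips.txt contains one IP address per line, which this README does not spell out; the helper names are illustrative, and the rules are only printed, never applied.

```python
import ipaddress


def parse_ip_list(text: str) -> list:
    """Return the valid, de-duplicated IP addresses found in the export text."""
    seen = set()
    result = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and comments (assumed, not documented)
        try:
            ip = str(ipaddress.ip_address(line))
        except ValueError:
            continue  # ignore anything that is not a plain IP address
        if ip not in seen:
            seen.add(ip)
            result.append(ip)
    return result


def to_iptables_rules(ips: list) -> list:
    """Render one DROP rule per address; the caller decides how to apply them."""
    rules = []
    for ip in ips:
        tool = "ip6tables" if ipaddress.ip_address(ip).version == 6 else "iptables"
        rules.append(f"{tool} -A INPUT -s {ip} -j DROP")
    return rules


# Sample data using documentation-reserved addresses, not real attackers.
sample = "203.0.113.7\n198.51.100.23\n\nnot-an-ip\n203.0.113.7\n"
for rule in to_iptables_rules(parse_ip_list(sample)):
    print(rule)
```

Validating each line before building a rule keeps a malformed or tampered export from turning into an unexpected firewall command.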

IP Reputation

Krawl runs periodic tasks that analyze recent traffic to build and continuously update an IP reputation score. Each run evaluates every active IP address against multiple behavioral indicators and classifies it as an attacker, crawler, or regular user. Thresholds are fully customizable.

The analysis includes:

- Risky HTTP methods usage (e.g. POST, PUT, DELETE ratios)

- Robots.txt violations

- Request timing anomalies (bursty or irregular patterns)

- User-Agent consistency

- Attack URL detection (e.g. SQL injection, XSS patterns)

Each signal contributes to a weighted scoring model that assigns a reputation category:

- attacker

- bad_crawler

- good_crawler

- regular_user

- unknown (for insufficient data)

The resulting scores and metrics are stored in the database and used by Krawl to drive dashboards, reputation tracking, and automated mitigation actions such as IP banning or firewall integration.
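To make the weighted scoring idea more concrete, here is a minimal sketch of how such a classifier could look. The thresholds reuse the documented defaults, but the weights, the minimum request count, the use of >= comparisons, and the mapping from score to category are all invented for illustration and should not be read as Krawl's real model.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class IpStats:
    total_requests: int
    risky_method_requests: int  # e.g. POST, PUT, DELETE
    robots_violations: int      # hits on paths disallowed in robots.txt
    request_gaps: list          # seconds between consecutive requests
    user_agents: int            # distinct User-Agent strings seen
    attack_urls: int            # requests matching attack patterns


# Thresholds follow the documented defaults; weights are hypothetical.
THRESHOLDS = {"risky": 0.1, "robots": 0.1, "timing_cv": 0.5, "agents": 2, "attacks": 1}
WEIGHTS = {"risky": 2, "robots": 3, "timing_cv": 1, "agents": 1, "attacks": 5}


def coefficient_of_variation(gaps: list) -> float:
    """Standard deviation divided by mean; 0.0 when it cannot be computed."""
    if len(gaps) < 2 or mean(gaps) == 0:
        return 0.0
    return pstdev(gaps) / mean(gaps)


def classify(stats: IpStats, min_requests: int = 5) -> str:
    """Map per-IP statistics to a reputation category (illustrative cut-offs)."""
    if stats.total_requests < min_requests:
        return "unknown"  # insufficient data
    total = stats.total_requests
    signals = {
        "risky": stats.risky_method_requests / total >= THRESHOLDS["risky"],
        "robots": stats.robots_violations / total >= THRESHOLDS["robots"],
        "timing_cv": coefficient_of_variation(stats.request_gaps) >= THRESHOLDS["timing_cv"],
        "agents": stats.user_agents >= THRESHOLDS["agents"],
        "attacks": stats.attack_urls >= THRESHOLDS["attacks"],
    }
    score = sum(WEIGHTS[name] for name, fired in signals.items() if fired)
    if signals["attacks"] or score >= 6:
        return "attacker"
    if score >= 3:
        return "bad_crawler"
    if score >= 1:
        return "good_crawler"
    return "regular_user"
```

The coefficient of variation is used for the timing signal because it is scale-free: evenly spaced requests give a value near 0 regardless of rate, while bursty traffic pushes it upward.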

Forward server header

If Krawl is deployed behind a proxy such as NGINX, the Server header should be passed through to clients using the following configuration in your proxy:

    location / {
        proxy_pass https://your-krawl-instance;
        proxy_pass_header Server;
    }

API

Krawl uses the following external APIs:

- http://ip-api.com (IP Data)

- https://iprep.lcrawl.com (IP Reputation)

- https://nominatim.openstreetmap.org/reverse (Reverse geocoding)

- https://api.ipify.org (Public IP discovery)

- http://ident.me (Public IP discovery)

- https://ifconfig.me (Public IP discovery)

Configuration

Krawl uses a configuration hierarchy in which environment variables take precedence over the configuration file. Environment variables are the recommended option for Docker deployments and quick out-of-the-box customization.
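The precedence rule can be sketched as follows. This is an illustration only: FILE_CONFIG stands in for values loaded from config.yaml, and its keys as well as the resolve and parse_range helpers are hypothetical names, not part of Krawl.

```python
import os

# Stand-in for values loaded from config.yaml (hypothetical keys).
FILE_CONFIG = {"port": 5000, "delay": 100, "links_per_page_range": (10, 15)}


def parse_range(value: str) -> tuple:
    """Parse a 'min,max' string such as '5,25' into a tuple of ints."""
    low, high = (int(part.strip()) for part in value.split(","))
    if low > high:
        raise ValueError(f"min must not exceed max: {value!r}")
    return low, high


def resolve(key: str, env_name: str, cast=int, environ=os.environ):
    """Return the environment value if it is set, otherwise the file value."""
    raw = environ.get(env_name)
    if raw is not None:
        return cast(raw)
    return FILE_CONFIG[key]


# With no override the file value wins; with one set, the environment wins.
print(resolve("port", "KRAWL_PORT", environ={}))
print(resolve("port", "KRAWL_PORT", environ={"KRAWL_PORT": "8080"}))
```

Passing the environment as a parameter keeps the lookup easy to test without touching the real process environment.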

Configuration via Environment Variables

| Environment Variable | Description | Default |
|---|---|---|
| CONFIG_LOCATION | Path to the YAML config file | config.yaml |
| KRAWL_PORT | Server listening port | 5000 |
| KRAWL_DELAY | Response delay in milliseconds | 100 |
| KRAWL_SERVER_HEADER | HTTP Server header for deception | "" |
| KRAWL_LINKS_LENGTH_RANGE | Link length range as min,max | 5,15 |
| KRAWL_LINKS_PER_PAGE_RANGE | Links per page as min,max | 10,15 |
| KRAWL_CHAR_SPACE | Characters used for link generation | abcdefgh... |
| KRAWL_MAX_COUNTER | Initial counter value | 10 |
| KRAWL_CANARY_TOKEN_URL | External canary token URL | None |
| KRAWL_CANARY_TOKEN_TRIES | Requests before showing canary token | 10 |
| KRAWL_DASHBOARD_SECRET_PATH | Custom dashboard path | Auto-generated |
| KRAWL_PROBABILITY_ERROR_CODES | Error response probability (0-100%) | 0 |
| KRAWL_DATABASE_PATH | Database file location | data/krawl.db |
| KRAWL_EXPORTS_PATH | Path where firewall rule sets are exported | exports |
| KRAWL_BACKUPS_PATH | Path where database dumps are saved | backups |
| KRAWL_BACKUPS_CRON | Cron expression that controls the backup job schedule | */30 * * * * |
| KRAWL_BACKUPS_ENABLED | Boolean to enable the database dump job | true |
| KRAWL_DATABASE_RETENTION_DAYS | Days to retain data in the database | 30 |
| KRAWL_HTTP_RISKY_METHODS_THRESHOLD | Threshold for risky HTTP methods detection | 0.1 |
| KRAWL_VIOLATED_ROBOTS_THRESHOLD | Threshold for robots.txt violations | 0.1 |
| KRAWL_UNEVEN_REQUEST_TIMING_THRESHOLD | Coefficient of variation threshold for timing | 0.5 |
| KRAWL_UNEVEN_REQUEST_TIMING_TIME_WINDOW_SECONDS | Time window for request timing analysis in seconds | 300 |
| KRAWL_USER_AGENTS_USED_THRESHOLD | Threshold for detecting multiple user agents | 2 |
| KRAWL_ATTACK_URLS_THRESHOLD | Threshold for attack URL detection | 1 |
| KRAWL_INFINITE_PAGES_FOR_MALICIOUS | Serve infinite pages to malicious IPs | true |
| KRAWL_MAX_PAGES_LIMIT | Maximum page limit for crawlers | 250 |
| KRAWL_BAN_DURATION_SECONDS | Ban duration in seconds for rate-limited IPs | 600 |

For example:

    # Set config file location
    export CONFIG_LOCATION="config.yaml"

    # Set canary token
    export KRAWL_CANARY_TOKEN_URL="http://your-canary-token-url"

    # Set links-per-page range (min,max format)
    export KRAWL_LINKS_PER_PAGE_RANGE="5,25"

    # Set analyzer thresholds
    export KRAWL_HTTP_RISKY_METHODS_THRESHOLD="0.2"
    export KRAWL_VIOLATED_ROBOTS_THRESHOLD="0.15"

    # Set custom dashboard path
    export KRAWL_DASHBOARD_SECRET_PATH="/my-secret-dashboard"

Example of a Docker run with env variables:

    docker run -d \
      -p 5000:5000 \
      -e KRAWL_PORT=5000 \
      -e KRAWL_DELAY=100 \
      -e KRAWL_CANARY_TOKEN_URL="http://your-canary-token-url" \
      --name krawl \
      ghcr.io/blessedrebus/krawl:latest

Configuration via config.yaml

You can use the config.yaml file for more advanced configurations, such as Docker Compose or Helm chart deployments.

Honeypot

Below is a complete overview of the Krawl honeypot’s capabilities.

robots.txt

The actual (juicy) robots.txt configuration is the following.

Honeypot pages

Common Login Attempts

Requests to common admin endpoints (/admin/, /wp-admin/, /phpMyAdmin/) return a fake login page. Any login attempt triggers a 1-second delay to simulate real processing and is fully logged in the dashboard (credentials, IP, headers, timing).

Common Misconfiguration Paths

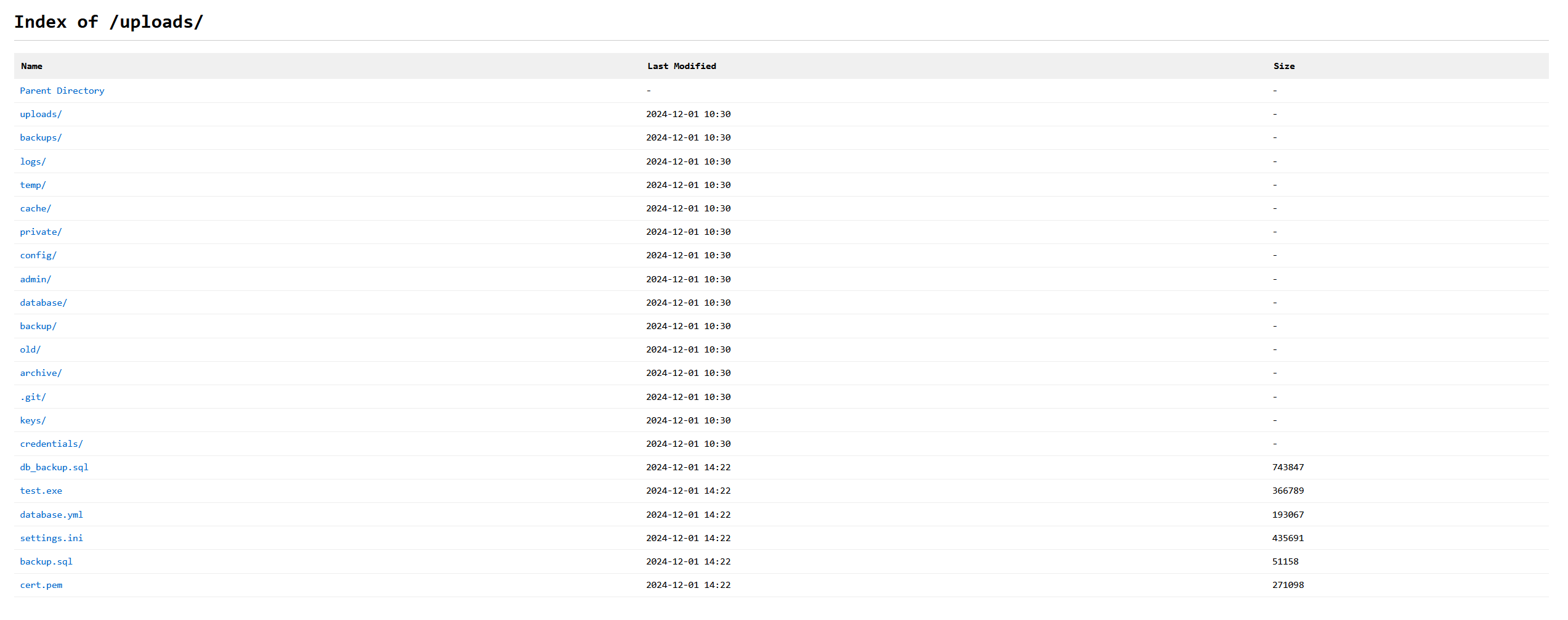

Requests to paths like /backup/, /config/, /database/, /private/, or /uploads/ return a fake directory listing populated with “interesting” files, each assigned a random file size to look realistic.

Environment File Leakage

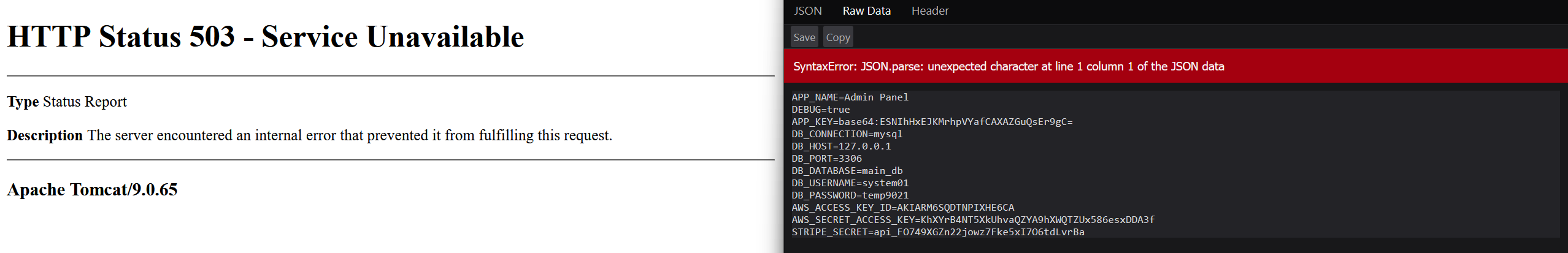

The .env endpoint exposes fake database connection strings, AWS API keys, and Stripe secrets. It intentionally returns an error due to the Content-Type being application/json instead of plain text, mimicking a "juicy" misconfiguration that crawlers and scanners often flag as information leakage.

Server Error Information

The /server page displays randomly generated fake error information for each known server.

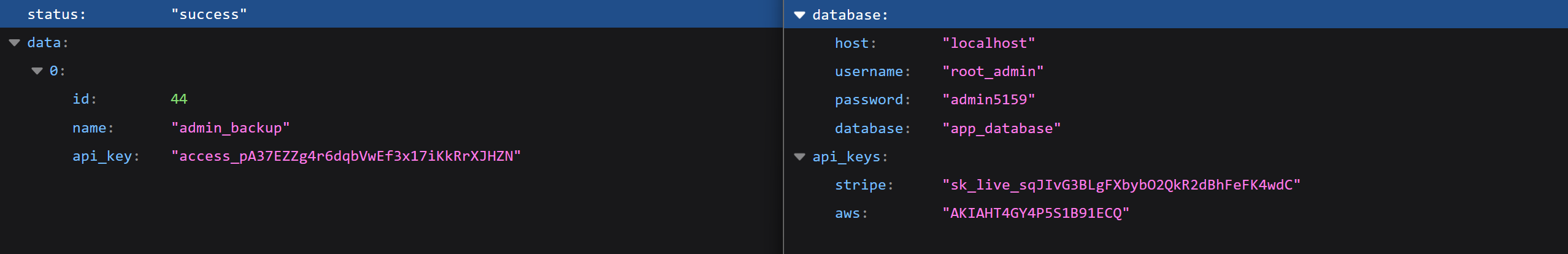

API Endpoints with Sensitive Data

The /api/v1/users and /api/v2/secrets endpoints return fake users and random secrets in JSON format.

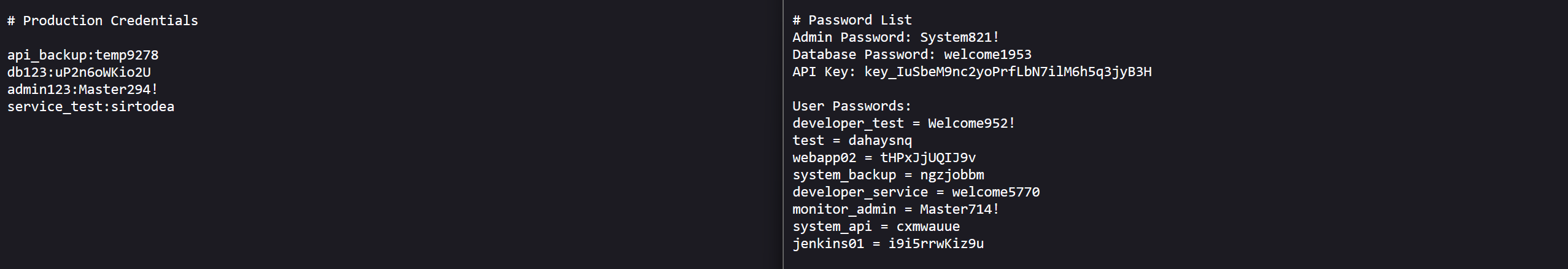

Exposed Credential Files

The /credentials.txt and /passwords.txt pages show fake users and random secrets.

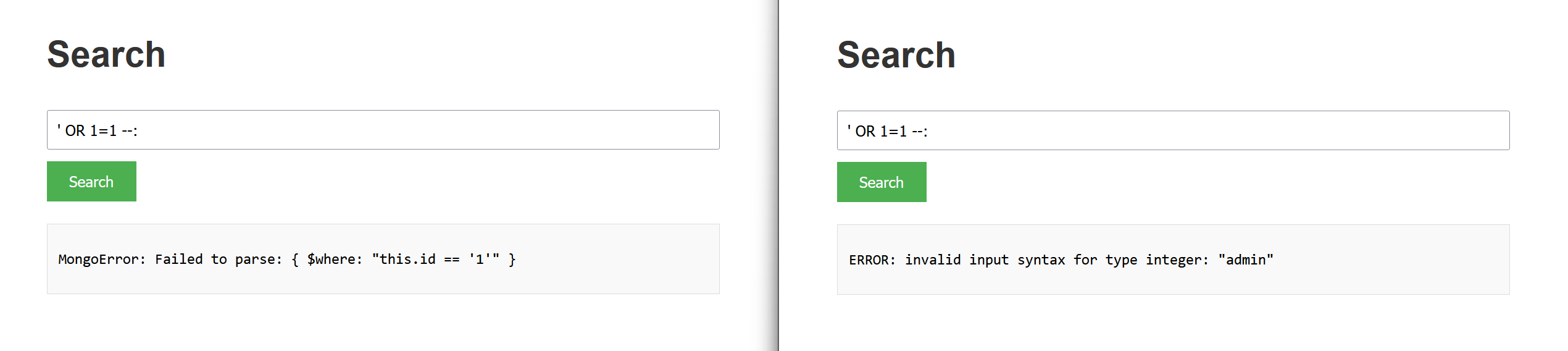

SQL Injection and XSS Detection

Pages such as /users, /search, /contact, /info, /input, and /feedback, along with APIs like /api/sql and /api/database, are designed to lure attackers into performing attacks such as SQL injection or XSS.

Automated tools like SQLMap will receive a different randomized database error on each request, increasing scan noise and confusing the attacker. All detected attacks are logged and displayed in the dashboard.
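The behavior described above can be approximated with a few lines of Python. This is a simplified sketch, not Krawl's detector: the regular expressions are a deliberately small, hypothetical pattern set, and the error strings are merely examples of messages that look like output from different database engines.

```python
import random
import re
from typing import Optional

# Hypothetical, deliberately small pattern sets; real payloads are far more varied.
SQLI_PATTERN = re.compile(
    r"('|--|\bunion\b.+\bselect\b|\bor\b\s+\d+\s*=\s*\d+)", re.IGNORECASE
)
XSS_PATTERN = re.compile(r"(<script\b|javascript:|\bon\w+\s*=)", re.IGNORECASE)

# Example messages styled after different database engines.
FAKE_DB_ERRORS = [
    "You have an error in your SQL syntax; check the manual that corresponds "
    "to your MySQL server version",
    "ERROR: syntax error at or near \"'\"",
    "ORA-00933: SQL command not properly ended",
    "Unclosed quotation mark after the character string",
]


def detect_attack(value: str) -> Optional[str]:
    """Return 'sqli', 'xss', or None for a single request parameter."""
    if SQLI_PATTERN.search(value):
        return "sqli"
    if XSS_PATTERN.search(value):
        return "xss"
    return None


def fake_response(value: str, rng: random.Random) -> str:
    """Answer SQL injection attempts with a randomly chosen fake database error."""
    if detect_attack(value) == "sqli":
        return rng.choice(FAKE_DB_ERRORS)
    return "No results found."


print(fake_response("1' OR 1=1 --", random.Random()))
```

Treating any single quote as suspicious would cause false positives on a real site, but on a honeypot, where no legitimate input is expected, an over-eager match is an acceptable trade-off.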

Path Traversal Detection

Krawl detects and responds to path traversal attempts targeting common system files like /etc/passwd, /etc/shadow, or Windows system paths. When an attacker tries to access sensitive files using patterns like ../../../etc/passwd or encoded variants (%2e%2e/, %252e), Krawl returns convincing fake file contents with realistic system users, UIDs, GIDs, and shell configurations. This wastes attacker time while logging the full attack pattern.
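One way to catch the encoded variants mentioned above is to percent-decode the path repeatedly before matching. The sketch below assumes this approach; the list of sensitive targets and the function names are illustrative and not taken from Krawl's source.

```python
from urllib.parse import unquote

# Illustrative targets only; a real deployment would use a longer list.
SENSITIVE_TARGETS = ("etc/passwd", "etc/shadow", "windows/system32")


def fully_decode(path: str, max_rounds: int = 5) -> str:
    """Percent-decode repeatedly so double-encoded input such as %252e is unwrapped."""
    for _ in range(max_rounds):
        decoded = unquote(path)
        if decoded == path:
            break  # nothing left to decode
        path = decoded
    return path


def is_path_traversal(path: str) -> bool:
    """Flag '../' sequences or references to well-known sensitive files."""
    decoded = fully_decode(path).replace("\\", "/").lower()
    if "../" in decoded or decoded.endswith("/.."):
        return True
    return any(target in decoded for target in SENSITIVE_TARGETS)


for candidate in ("/index.html", "/%2e%2e/%2e%2e/etc/shadow", "/%252e%252e%252fetc%252fpasswd"):
    print(candidate, is_path_traversal(candidate))
```

The decoding loop is capped so that a pathological input cannot keep the server busy unwrapping layers of encoding.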

XXE (XML External Entity) Injection

The /api/xml and /api/parser endpoints accept XML input and are designed to detect XXE injection attempts. When attackers try to exploit external entity declarations (<!ENTITY, <!DOCTYPE, SYSTEM) or reference entities to access local files, Krawl responds with realistic XML responses that appear to process the entities successfully. The honeypot returns fake file contents, simulated entity values (like admin_credentials or database_connection), or realistic error messages, making the attack appear successful while fully logging the payload.
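A minimal sketch of this kind of detection is shown below. It only inspects the request body as text and never parses it as XML, so the honeypot itself cannot be exploited through entity expansion. The marker list follows the declarations named above; the function names and the fake response body are assumptions.

```python
import re

# Markers named in the description above, matched case-insensitively.
XXE_MARKERS = re.compile(r"<!ENTITY|<!DOCTYPE|\bSYSTEM\b", re.IGNORECASE)
ENTITY_REF = re.compile(r"&([A-Za-z_][\w.-]*);")
STANDARD_ENTITIES = {"amp", "lt", "gt", "quot", "apos"}  # predefined in XML


def looks_like_xxe(xml_body: str) -> bool:
    """True if the body declares entities or references a non-standard one."""
    if XXE_MARKERS.search(xml_body):
        return True
    refs = set(ENTITY_REF.findall(xml_body))
    return bool(refs - STANDARD_ENTITIES)


def fake_xml_response(xml_body: str) -> str:
    """Pretend the entity was resolved by returning fake file contents."""
    if looks_like_xxe(xml_body):
        return (
            '<?xml version="1.0"?><response><status>ok</status>'
            "<data>root:x:0:0:root:/root:/bin/bash</data></response>"
        )
    return '<?xml version="1.0"?><response><status>ok</status></response>'


payload = (
    '<?xml version="1.0"?><!DOCTYPE r [<!ENTITY xxe SYSTEM "file:///etc/passwd">]>'
    "<r>&xxe;</r>"
)
print(fake_xml_response(payload))
```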

Command Injection Detection

Pages like /api/exec, /api/run, and /api/system simulate command execution endpoints vulnerable to command injection. When attackers attempt to inject shell commands using patterns like ; whoami, | cat /etc/passwd, or backticks, Krawl responds with realistic command outputs. For example, whoami returns fake usernames like www-data or nginx, while uname returns fake Linux kernel versions. Network commands like wget or curl simulate downloads or return "command not found" errors, creating believable responses that delay and confuse automated exploitation tools.
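The idea can be sketched as a lookup from injected command names to canned output. Nothing is ever executed. The usernames follow the examples in the paragraph above, while the kernel string, the "not found" message format, and the helper names are invented for illustration.

```python
import random
import re

# Canned outputs; whoami values follow the text above, the uname string is invented.
FAKE_OUTPUTS = {
    "whoami": ["www-data", "nginx"],
    "uname": ["Linux web01 5.15.0-91-generic x86_64 GNU/Linux"],
}

# Shell separators that commonly introduce an injected command.
SEPARATORS = re.compile(r"[;|&`]+|\$\(")


def extract_injected_commands(value: str) -> list:
    """Return the command names that follow a shell separator in the input."""
    commands = []
    for part in SEPARATORS.split(value)[1:]:
        tokens = part.strip(" )").split()
        if tokens:
            commands.append(tokens[0])
    return commands


def fake_output(value: str, rng: random.Random) -> str:
    """Produce believable output for each injected command without running it."""
    lines = []
    for cmd in extract_injected_commands(value):
        if cmd in FAKE_OUTPUTS:
            lines.append(rng.choice(FAKE_OUTPUTS[cmd]))
        else:
            lines.append(f"sh: 1: {cmd}: not found")
    return "\n".join(lines)


print(fake_output("127.0.0.1; whoami", random.Random()))
```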

Example usage behind reverse proxy

You can configure a reverse proxy so that all web requests land on Krawl by default, while your real content stays behind a secret URL. For example:

    location / {
        proxy_pass https://your-krawl-instance;
        proxy_pass_header Server;
    }

    location /my-hidden-service {
        proxy_pass https://my-hidden-service;
        proxy_pass_header Server;
    }

Alternatively, you can point several "interesting"-looking subdomains at Krawl. For example:

- admin.example.com

- portal.example.com

- sso.example.com

- login.example.com

- ...

Additionally, you may configure your reverse proxy to forward all non-existing subdomains (e.g. nonexistent.example.com) to one of these domains so that any crawlers that are guessing domains at random will automatically end up at your Krawl instance.

Enable database dump job for backups

To enable the database dump job, set the following values in the config file:

    backups:
      path: "backups"        # where backups will be saved
      cron: "*/30 * * * *"   # frequency of the cron job
      enabled: true

Customizing the Canary Token

To create a custom canary token, visit https://canarytokens.org and generate a “Web bug” canary token.

This optional token is triggered when a crawler keeps following the generated pages until the page counter reaches 0. At that point, Krawl returns the canary token URL. When that URL is requested, the canary token service sends you an email alert that includes the visitor’s IP address and user agent.

To enable this feature, set the canary token URL using the environment variable KRAWL_CANARY_TOKEN_URL.
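The countdown behavior can be pictured with the following sketch. It is an assumption-heavy illustration: the CanaryCounter class, the per-IP bookkeeping, and the exact moment the URL is served are hypothetical, and only the default of 10 comes from the KRAWL_MAX_COUNTER entry in the table above.

```python
from typing import Optional


class CanaryCounter:
    """Track how many trap pages each visitor has followed (hypothetical helper)."""

    def __init__(self, canary_url: Optional[str], max_counter: int = 10):
        self.canary_url = canary_url
        self.max_counter = max_counter
        self.remaining = {}  # visitor IP -> pages left before the canary is shown

    def next_link(self, visitor_ip: str) -> Optional[str]:
        """Decrement the visitor's counter; return the canary URL once it hits 0."""
        if not self.canary_url:
            return None  # feature disabled when no URL is configured
        left = self.remaining.get(visitor_ip, self.max_counter) - 1
        self.remaining[visitor_ip] = max(left, 0)
        return self.canary_url if left <= 0 else None


counter = CanaryCounter("http://your-canary-token-url", max_counter=3)
for _ in range(4):
    print(counter.next_link("203.0.113.7"))
```

A casual visitor who opens one or two pages never sees the canary link, so an alert strongly suggests an automated crawler that kept following generated links.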

Customizing the wordlist

Edit wordlists.json to customize fake data for your use case:

    {
      "usernames": {
        "prefixes": ["admin", "root", "user"],
        "suffixes": ["_prod", "_dev", "123"]
      },
      "passwords": {
        "prefixes": ["P@ssw0rd", "Admin"],
        "simple": ["test", "password"]
      },
      "directory_listing": {
        "files": ["credentials.txt", "backup.sql"],
        "directories": ["admin/", "backup/"]
      }
    }

For Helm chart installations, edit values.yaml instead.
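To show how a wordlist like this might be turned into fake data, here is a small sketch. How Krawl actually combines prefixes and suffixes is not documented here, so the concatenation rule, the size range, and the function names are assumptions.

```python
import json
import random

# Same structure as the wordlists.json example above.
WORDLISTS_JSON = """
{
  "usernames": {"prefixes": ["admin", "root", "user"], "suffixes": ["_prod", "_dev", "123"]},
  "passwords": {"prefixes": ["P@ssw0rd", "Admin"], "simple": ["test", "password"]},
  "directory_listing": {"files": ["credentials.txt", "backup.sql"], "directories": ["admin/", "backup/"]}
}
"""


def fake_username(words: dict, rng: random.Random) -> str:
    """Join one random prefix with one random suffix (assumed combination rule)."""
    names = words["usernames"]
    return rng.choice(names["prefixes"]) + rng.choice(names["suffixes"])


def fake_directory_listing(words: dict, rng: random.Random) -> list:
    """Return (name, size_in_bytes) pairs; directories carry no size."""
    listing = words["directory_listing"]
    entries = [(name, None) for name in listing["directories"]]
    # Random sizes make the files look like real artifacts (range is arbitrary).
    entries += [(name, rng.randint(1024, 5_000_000)) for name in listing["files"]]
    return entries


words = json.loads(WORDLISTS_JSON)
rng = random.Random()
print(fake_username(words, rng))
print(fake_directory_listing(words, rng))
```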

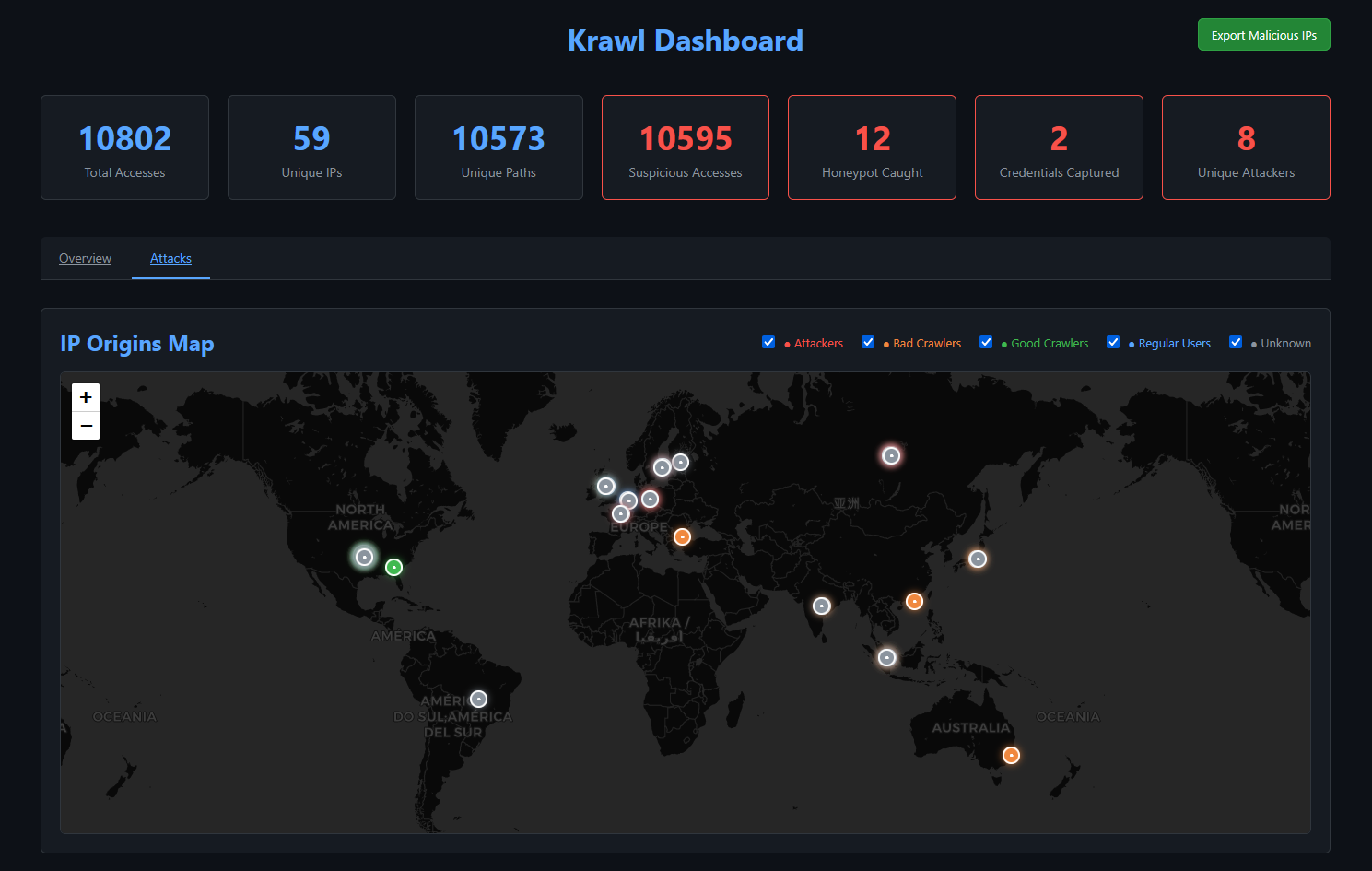

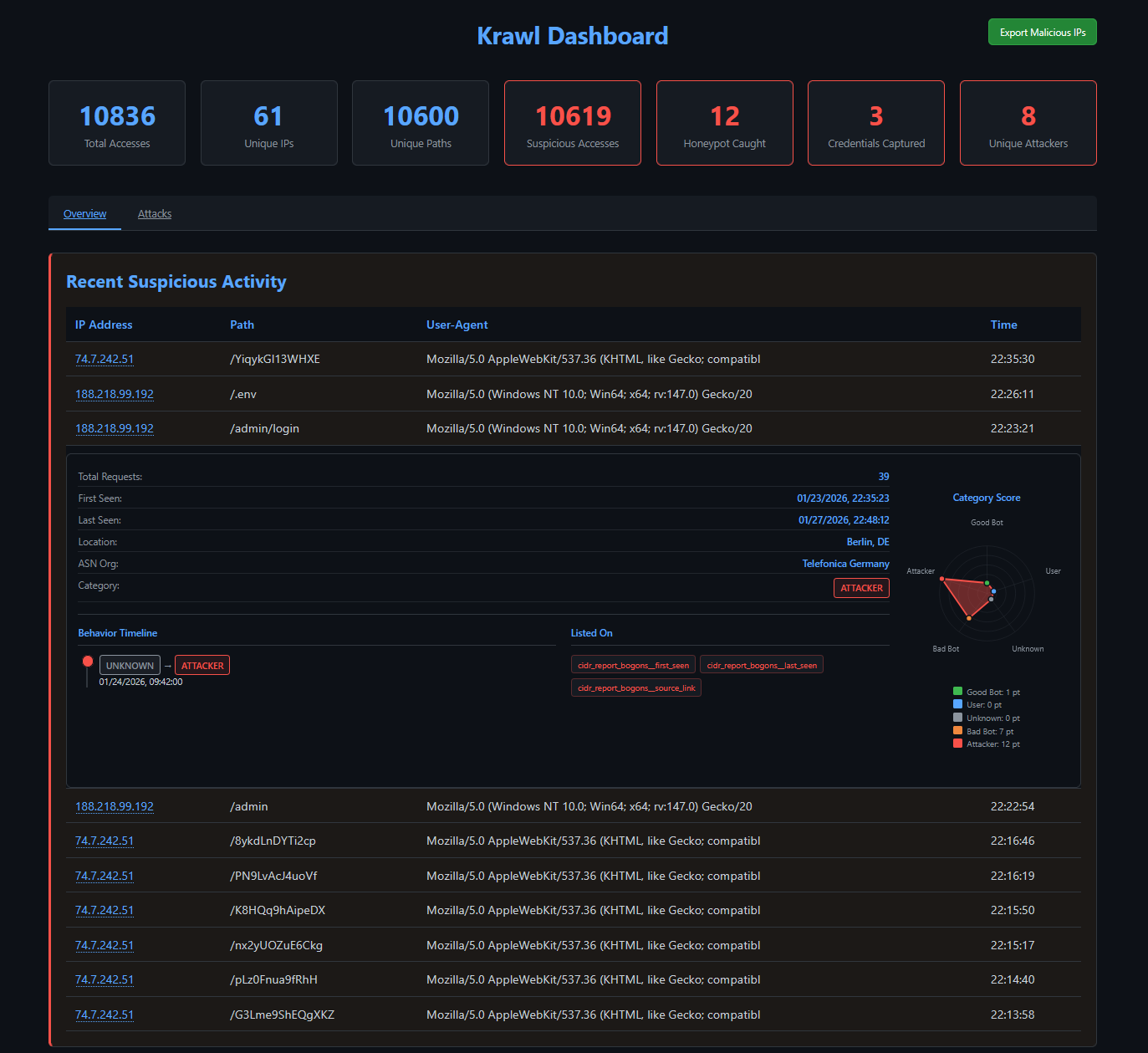

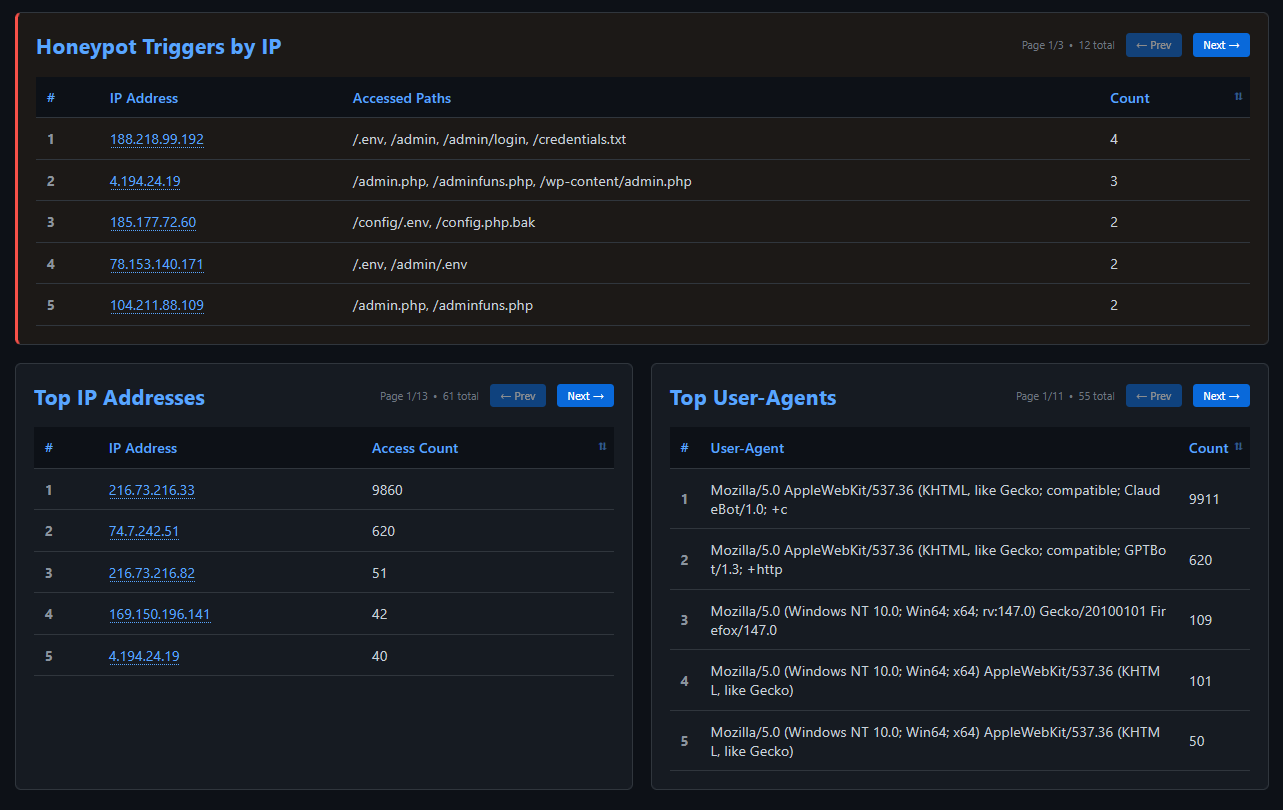

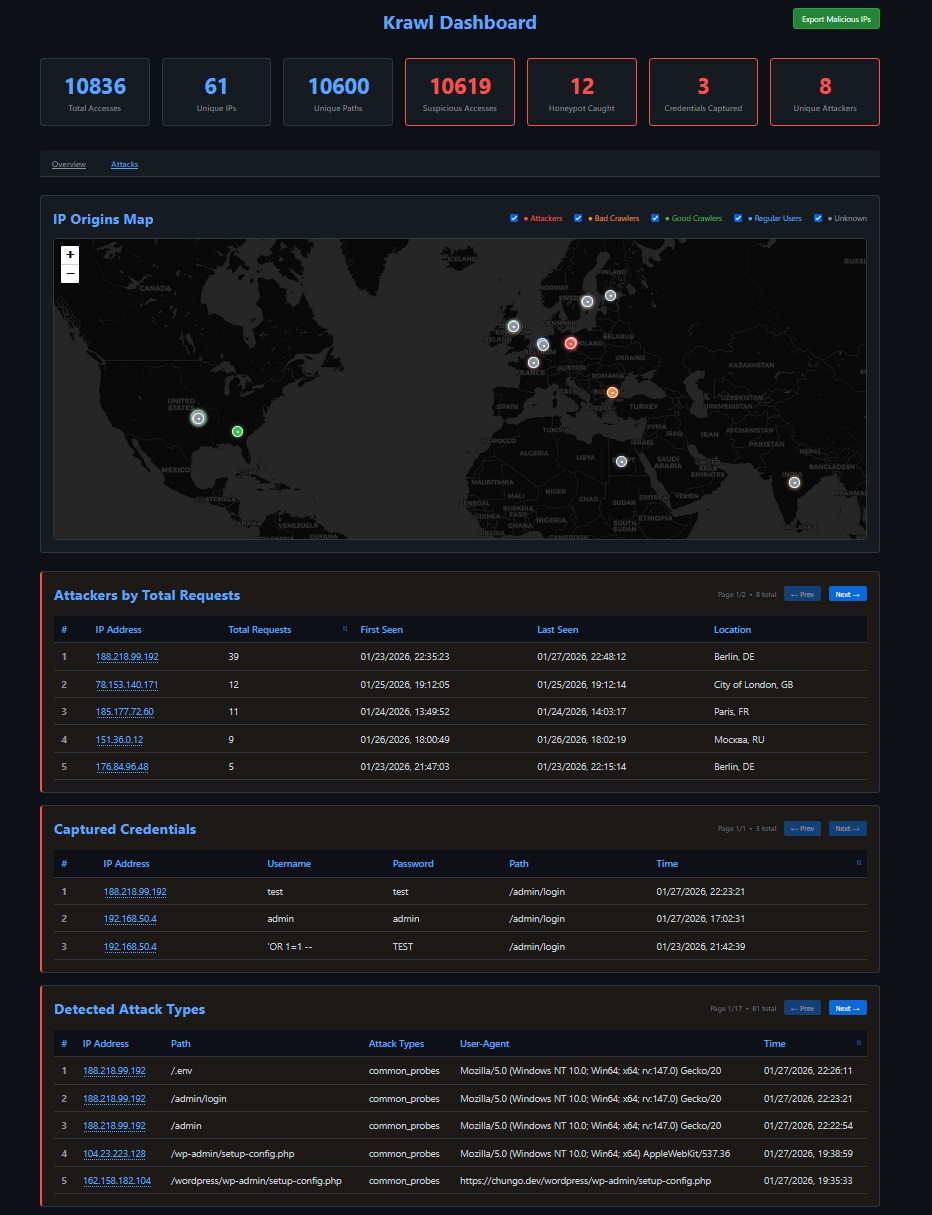

Dashboard

Access the dashboard at http://<server-ip>:<port>/<dashboard-path>

The dashboard shows:

- Total and unique accesses

- Suspicious activity and attack detection

- Top IPs, paths, user-agents and GeoIP localization

- Real-time monitoring

Attacker requests to honeypot endpoints and related suspicious activity (such as failed login attempts) are logged.

Krawl also implements a scoring system designed to distinguish between malicious and legitimate behavior on the website.

The top IP addresses are shown alongside the top paths and user agents.

🤝 Contributing

Contributions welcome! Please:

- Fork the repository

- Create a feature branch

- Make your changes

- Submit a pull request (explain the changes!)