## Demo

Tip: crawl the `robots.txt` paths for additional fun

### Krawl URL: [http://demo.krawlme.com](http://demo.krawlme.com)

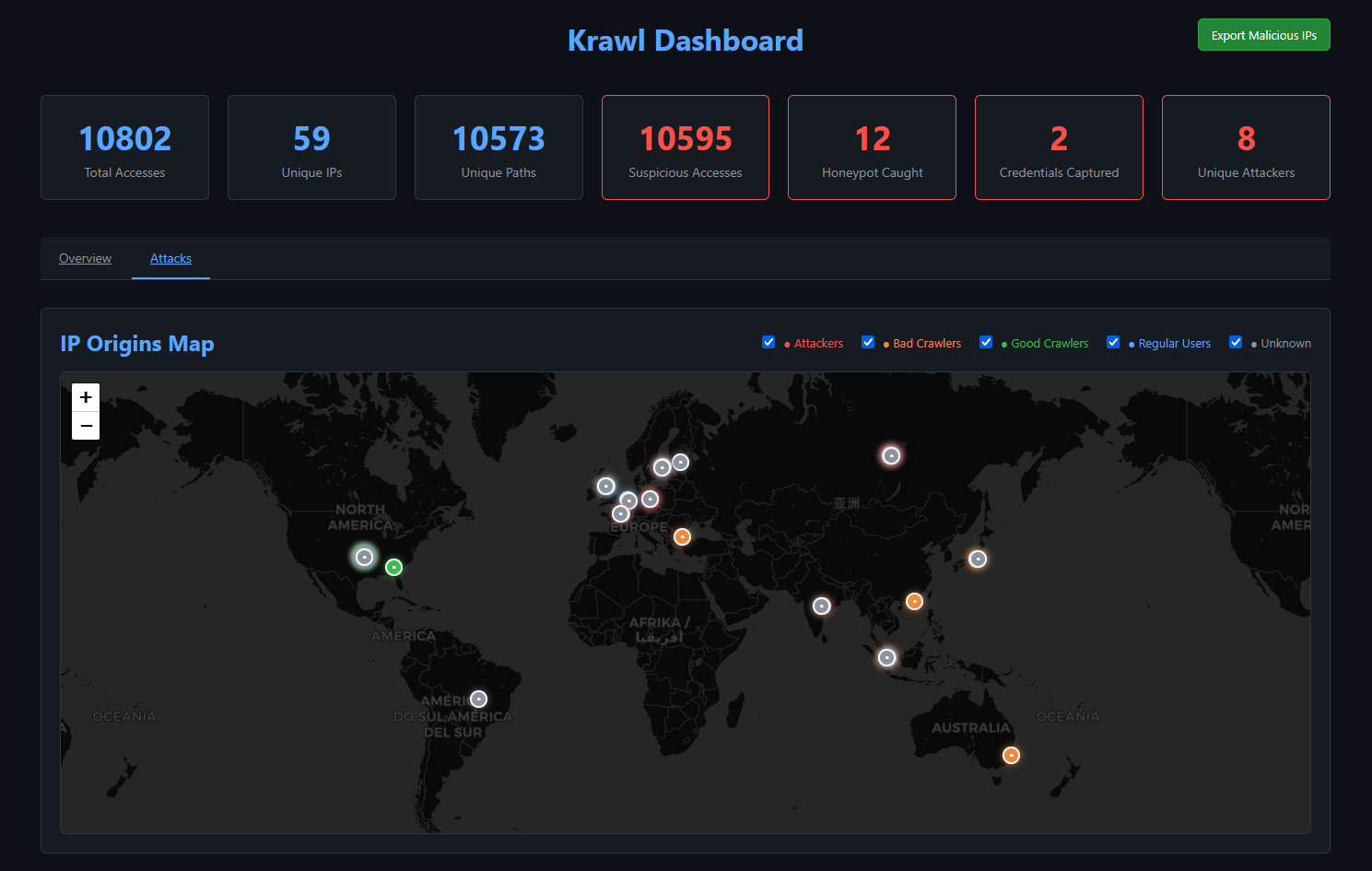

### View the dashboard [http://demo.krawlme.com/das_dashboard](http://demo.krawlme.com/das_dashboard)

## What is Krawl?

**Krawl** is a cloud‑native deception server designed to detect, delay, and analyze malicious attackers, web crawlers and automated scanners.

It creates realistic fake web applications filled with low‑hanging fruit such as admin panels, configuration files, and exposed fake credentials to attract and identify suspicious activity.

By wasting attacker resources, Krawl helps clearly distinguish malicious behavior from legitimate crawlers.

It features:

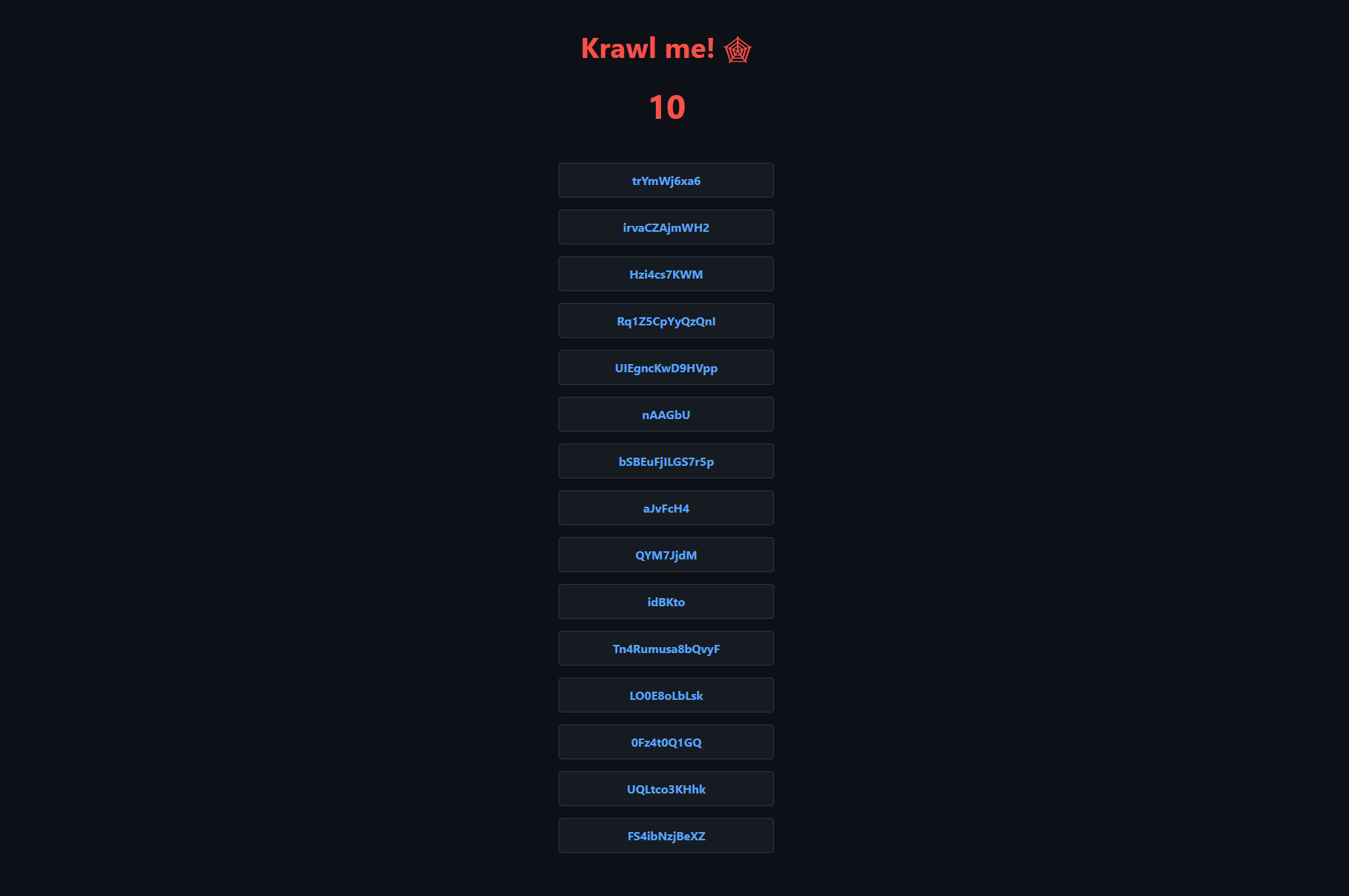

- **Spider Trap Pages**: Infinite random links that waste crawler resources, based on the [spidertrap project](https://github.com/adhdproject/spidertrap)

- **Fake Login Pages**: WordPress, phpMyAdmin, admin panels

- **Honeypot Paths**: Advertised in robots.txt to catch scanners

- **Fake Credentials**: Realistic-looking usernames, passwords, API keys

- **[Canary Token](#customizing-the-canary-token) Integration**: External alert triggering

- **Random Server Headers**: Confuse attacks that rely on the server header and version

- **Real-time Dashboard**: Monitor suspicious activity

- **Customizable Wordlists**: Easy JSON-based configuration

- **Random Error Injection**: Mimic real server behavior

## 🚀 Installation

### Docker Run

Run Krawl with the latest image:

```bash
docker run -d \
  -p 5000:5000 \
  -e KRAWL_PORT=5000 \
  -e KRAWL_DELAY=100 \
  -e KRAWL_DASHBOARD_SECRET_PATH="/my-secret-dashboard" \
  -e KRAWL_DATABASE_RETENTION_DAYS=30 \
  --name krawl \
  ghcr.io/blessedrebus/krawl:latest
```

Access the server at `http://localhost:5000`
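Once the container is up, one quick way to see which honeypot paths are advertised is to read `robots.txt`. The helper below is a minimal sketch, not part of Krawl: the function name `list_disallowed_paths` is hypothetical, and it assumes the file uses standard `Disallow: <path>` lines with a space after the colon.

```bash
# Hypothetical helper (not shipped with Krawl): read robots.txt content
# on stdin and print the path of every non-empty Disallow rule.
list_disallowed_paths() {
    awk '
        { sub(/\r$/, "") }                              # tolerate CRLF line endings
        tolower($1) == "disallow:" && $2 != "" { print $2 }
    '
}
```

With the container from the previous step running, it could be used as `curl -s http://localhost:5000/robots.txt | list_disallowed_paths`.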

### Docker Compose

Create a `docker-compose.yaml` file:

```yaml
services:
  krawl:
    image: ghcr.io/blessedrebus/krawl:latest
    container_name: krawl-server
    ports:
      - "5000:5000"
    environment:
      - CONFIG_LOCATION=config.yaml
      - TZ=Europe/Rome
    volumes:
      - ./config.yaml:/app/config.yaml:ro
      # bind mount for firewall exporters
      - ./exports:/app/exports
      - krawl-data:/app/data
    restart: unless-stopped

volumes:
  krawl-data:
```

Run with:

```bash
docker-compose up -d
```

Stop with:

```bash
docker-compose down
```

### Kubernetes

**Krawl is also available natively on Kubernetes**. Installation can be done either [via manifest](kubernetes/README.md) or [using the helm chart](helm/README.md).

## Use Krawl to Ban Malicious IPs

Krawl uses a reputation-based system to classify attacker IP addresses. Every five minutes, Krawl exports the identified malicious IPs to a `malicious_ips.txt` file.
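As a rough sketch of how the exported list could feed a firewall, the helpers below filter a local copy of `malicious_ips.txt` and print one `add` line per address. This is an illustration under stated assumptions: the file is assumed to contain one IPv4 address per line (the format is not specified here), and the function names and the set name `krawl-blocklist` are hypothetical.

```bash
# Hypothetical helpers (not shipped with Krawl).
# Assumption: the export holds one IPv4 address per line.

# Print the unique, syntactically valid IPv4 addresses found in file $1.
valid_ipv4_lines() {
    awk '
        { sub(/\r$/, ""); gsub(/^[ \t]+|[ \t]+$/, "") }   # trim CR and whitespace
        /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$/ {
            n = split($0, octet, ".")
            ok = 1
            for (i = 1; i <= n; i++) if (octet[i] + 0 > 255) ok = 0
            if (ok && !seen[$0]++) print                   # skip duplicates
        }
    ' "$1"
}

# Print "add <set> <ip>" lines for set name $1 from the addresses in file $2.
ipset_add_lines() {
    valid_ipv4_lines "$2" | sed "s/^/add $1 /"
}
```

The output could then be piped to a tool such as `ipset restore -exist`, assuming a set with that name already exists on the host; malformed or duplicate entries are dropped before they reach the firewall.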

This file can either be mounted from the Docker container into another system or downloaded directly via `curl`:

```bash

curl https://your-krawl-instance/